How AI’s energy constraints are driving the infrastructure investments that will enable ubiquitous computing

In 1865, economist William Stanley Jevons observed a paradox in Britain’s coal industry: as steam engines became more efficient and coal-powered technology advanced, total coal consumption increased rather than decreased. More efficient engines didn’t reduce coal demand—they made coal-powered applications economically viable in new contexts, from railways to factories to ships. The same pattern has repeated across every major energy transition since. As automobile engines became more fuel-efficient, total gasoline consumption soared because cheaper per-mile costs enabled more driving, more cars, and more applications for transportation.

While Jevons’ paradox doesn’t apply universally—many efficiency gains do reduce total consumption—computing has consistently followed this pattern. Personal computers didn’t reduce total computing energy use; they made computation cheap enough for everyone. The internet didn’t decrease data transmission; it made information sharing ubiquitous.

This paradox is now playing out with artificial intelligence at unprecedented scale and speed. According to Lawrence Berkeley National Laboratory and Department of Energy reports, US data centers consumed 176 terawatt-hours of electricity in 2023, up from 58 TWh in 2014. Even as researchers develop dramatically more efficient AI architectures, total energy consumption continues climbing because lower costs per computation unlock new applications, more users, and ubiquitous deployment.
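As a rough check on the figures above, the growth from 58 TWh to 176 TWh can be expressed as a multiple and a compound annual rate. The sketch below is illustrative arithmetic only: the function name `growth_summary` is ours, and it assumes smooth compounding across the nine years, which the underlying reports do not claim.

```python
def growth_summary(start_twh: float, end_twh: float, years: int) -> tuple[float, float]:
    """Return (overall growth multiple, compound annual growth rate)."""
    multiple = end_twh / start_twh
    # Assumption: growth compounds evenly each year (a simplification).
    cagr = multiple ** (1 / years) - 1
    return multiple, cagr


# Figures quoted in the text: 58 TWh in 2014, 176 TWh in 2023.
multiple, cagr = growth_summary(58.0, 176.0, 2023 - 2014)
print(f"{multiple:.2f}x overall, {cagr:.1%} per year")
```

Under that simplifying assumption, consumption roughly tripled, which works out to about 13% growth per year.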

The efficiency breakthroughs emerging from today’s energy constraints will not reduce AI’s total resource footprint—they will explode it by making artificial intelligence cheap enough to embed everywhere.

The Solar Reality

Nick Bostrom’s famous thought experiment imagined an AI system built to maximize paperclip production that eventually converts all available matter, humans included, into paperclips. The scenario illustrates how a system optimizing for a single goal might pursue it with devastating single-mindedness. But perhaps a more realistic scenario is already unfolding: an energy-maximizing economy steadily converting Earth’s surface into solar collection infrastructure.

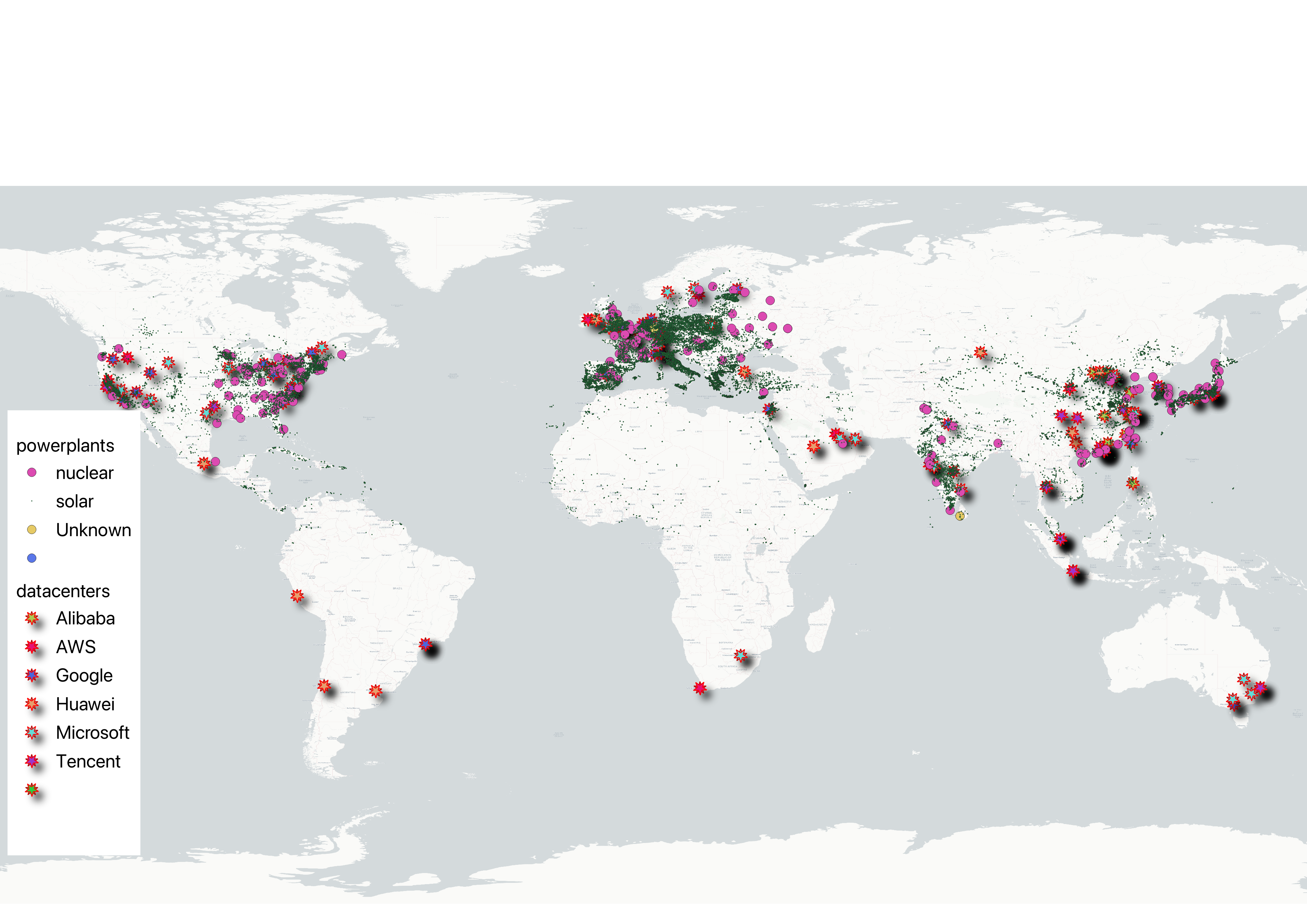

If you map global energy infrastructure, solar installations now blanket the developed world with startling density across North America, Europe, Asia, and increasingly Africa. Hide the solar data and the underlying pattern emerges more clearly: data centers cluster near power sources and urban centers, following a basic rule of industrial geography, which is to locate close to inputs and customers. Overlay the solar data again, and Bostrom’s optimization scenario seems less like science fiction and more like economic inevitability.

This geographic clustering reveals the deeper constraint driving today’s infrastructure boom: the competition between AI’s voracious energy appetite and available supply.

The Constraint Phase

The current numbers illustrate the scale of this transformation. Training a large language model like GPT-3 required approximately 1,287 megawatt-hours of electricity, equivalent to the annual power consumption of about 130 US homes, according to research published in Communications of the ACM. Running these models to serve millions of users demands still more. The infrastructure response has been dramatic. The Stargate Project, announced by OpenAI, is a joint venture between SoftBank, OpenAI, Oracle, and MGX that intends to invest $500 billion over four years building new AI infrastructure in the United States. Google announced $75 billion in capital expenditures for 2025 to expand its AI infrastructure and data centers, according to company earnings reports.
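The household comparison above can be sanity-checked by dividing the training estimate by the number of homes. This is illustrative arithmetic using only the two figures quoted in the text; the function name is ours.

```python
TRAINING_MWH = 1_287  # GPT-3 training estimate quoted in the text
HOMES = 130           # household equivalence quoted in the text


def implied_mwh_per_home(total_mwh: float, homes: int) -> float:
    """Annual electricity use per home implied by the comparison."""
    return total_mwh / homes


per_home = implied_mwh_per_home(TRAINING_MWH, HOMES)
print(f"{per_home:.1f} MWh per home per year ({per_home * 1000:,.0f} kWh)")
```

The comparison implies about 9.9 MWh per home per year, so the "130 homes" figure holds only to the extent that this matches typical US household use.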

These investments reflect genuine scarcity. New electrical transmission projects now face delays of several years in many regions due to permitting, environmental reviews, and construction bottlenecks. Some utilities have paused new connections until grid capacity can be expanded. In Ireland, data centers consumed 21% of the country’s total metered electricity in 2023, according to Central Statistics Office data. EirGrid had already imposed a moratorium on new data center connections in Dublin until 2028, a pause RTÉ reported in early 2022.

The result is a global scramble for land that offers both reliable power and proximity to users. Hyperscalers—the handful of technology companies that operate the world’s largest data centers, including Google, Microsoft, Amazon, and Meta—position their facilities strategically near power sources and urban centers.

The economic consequences are profound, with industrial land in prime data center markets experiencing dramatic price increases as developers compete for power-accessible locations.

The Market Response

To analyze how financial markets have recognized this infrastructure transformation, we collected stock price data from Yahoo Finance for key companies in the data center ecosystem. The results reveal a clear pattern: while technology stocks grabbed headlines, the infrastructure that enables artificial intelligence has delivered remarkable returns.

Iron Mountain, which provides data storage and backup services, has gained 349% since January 2020. Johnson Controls, which supplies cooling and building automation systems essential to data centers, has risen 114%. The largest data center real estate investment trusts (REITs)—Digital Realty Trust and Equinix—have gained 79% and 78% respectively. Even Jones Lang LaSalle, the property services firm that facilitates many data center deals, has risen 44%.
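To make the percentages above easier to compare, note that a gain of G% means each dollar invested became 1 + G/100 dollars. The sketch below applies that conversion to the gains quoted in the text. The helper names are illustrative, and no share prices are included because the article does not list them.

```python
# Percentage gains since January 2020, as quoted in the text.
GAINS_PCT = {
    "Iron Mountain": 349,
    "Johnson Controls": 114,
    "Digital Realty Trust": 79,
    "Equinix": 78,
    "Jones Lang LaSalle": 44,
}


def cumulative_return_pct(start_price: float, end_price: float) -> float:
    """Percentage gain between two prices (dividends ignored)."""
    return (end_price / start_price - 1) * 100


def value_multiple(gain_pct: float) -> float:
    """How many dollars each invested dollar became."""
    return 1 + gain_pct / 100


for company, gain in GAINS_PCT.items():
    print(f"{company}: +{gain}% is {value_multiple(gain):.2f}x the starting value")
```

A price-only calculation like `cumulative_return_pct` understates total return for dividend-paying companies such as REITs, so whether the quoted figures include dividends matters when comparing them.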

These returns reflect market recognition that AI’s growth depends on physical infrastructure that cannot be conjured from venture capital alone. Data centers require specialized cooling systems, uninterruptible power supplies, and proximity to electrical substations capable of delivering tens of megawatts continuously.

The Innovation Response

As Jevons showed, efficiency breeds demand, and AI is no exception. Current constraints are spurring the very innovations that will eventually explode total demand. Neuromorphic chips, which mimic the brain’s neural architecture, promise thousand-fold improvements in energy efficiency over conventional processors. Intel’s Hala Point neuromorphic system demonstrates an efficiency exceeding 15 trillion 8-bit operations per second per watt, a level that rivals architectures built on graphics processing units, according to Intel Corporation specifications.

Model compression techniques offer another path forward, with research showing significant energy reductions without sacrificing accuracy. Even AI’s architectural approach is shifting: OpenAI’s latest models combine neural networks with symbolic reasoning—exactly the hybrid approach that pure scaling advocates had dismissed.

The efficiency drive is also producing smaller, more specialized models. Corporate users are increasingly turning to small language models (SLMs) with fewer parameters that can run on cheaper hardware and consume less energy than their massive counterparts. These models, often trained by larger systems, can handle specific tasks—from processing receipts to powering chatbots—without requiring the computational overhead of general-purpose AI. Early adopters find that targeted efficiency often beats brute-force intelligence.

But the efficiency paradox suggests these breakthroughs will follow the familiar pattern: making AI cheaper and more accessible will expand its applications exponentially. Bostrom’s optimization scenario becomes more plausible when viewed through this lens—each efficiency breakthrough doesn’t reduce total resource consumption but makes AI viable for new applications, expanding the infrastructure footprint.
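The condition under which efficiency raises total consumption can be made concrete with a simple constant-elasticity demand model. This is a textbook simplification, not a model taken from the sources cited here: it assumes the cost of an AI task falls in proportion to efficiency, and that demand responds with a fixed price elasticity. The elasticity values below are hypothetical.

```python
def total_energy(efficiency_gain: float, elasticity: float,
                 baseline_energy: float = 1.0) -> float:
    """Total energy use after efficiency improves by `efficiency_gain` times.

    Assumptions (illustrative only):
      * cost per unit of service falls in proportion to efficiency;
      * demand for the service has constant price elasticity.
    """
    # Price falls by 1/efficiency_gain, so demand rises by gain ** elasticity.
    service_demand = efficiency_gain ** elasticity
    # Each unit of service now needs 1/efficiency_gain as much energy.
    energy_per_service = 1.0 / efficiency_gain
    return baseline_energy * service_demand * energy_per_service


for elasticity in (0.5, 1.0, 1.5):  # hypothetical values
    ratio = total_energy(10.0, elasticity)
    print(f"elasticity {elasticity}: {ratio:.2f}x baseline energy")
```

In this sketch a tenfold efficiency gain cuts total energy to about a third of baseline when elasticity is 0.5, leaves it unchanged at 1.0, and roughly triples it at 1.5. Jevons’ pattern appears only when demand is elastic enough, which is why the paradox does not apply universally.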

The Ubiquity Phase

The path to ubiquitous AI will not be linear. History suggests technological adoption follows cycles of boom, speculation, bust, and eventual rational deployment. Britain’s railway mania of the 1840s offers the clearest parallel: massive overinvestment in tracks, stations, and rolling stock, followed by a financial collapse in the late 1840s that left abandoned lines and bankrupt companies scattered across the countryside. Yet by the early 1900s, railways had indeed transformed British society, not through the original speculative ventures but through the consolidated, efficient networks that emerged from the wreckage.

AI infrastructure may follow a similar arc. Today’s frantic buildout of data centers and solar farms could leave stranded assets—empty facilities in the wrong locations, obsolete chip architectures, overbuilt transmission lines. Some metropolitan areas that attract current investment may prove economically unsustainable when more efficient systems emerge. But this cyclical process doesn’t invalidate the long-term trend; it simply makes it more volatile.

The efficiency innovations emerging from current constraints will enable AI to spread to applications currently beyond economic reach. Cheap, efficient AI will embed in cars, appliances, sensors, clothing, and devices not yet imagined. Each individual application will consume less energy than today’s data centers, but the aggregate demand from billions of AI-enabled devices will dwarf current consumption.

The geography of this expansion is taking shape. Energy-rich regions with cold climates attract the largest facilities, while urban areas near fiber-optic networks host infrastructure serving end users. The Nordic countries offer hydroelectric abundance and arctic temperatures. Texas provides cheap wind and solar power. Singapore serves as Asia’s hub despite expensive electricity.

This siting pattern reflects what researchers call “climate arbitrage”: positioning facilities in cooler climates to reduce cooling costs while accessing cheaper renewable energy. The European Research Council’s GEOCLOUD project documents how hyperscale data centers cluster in strategic regions as governments balance digital sovereignty against technological dependence.

Market Patterns

The data reveals how markets have already begun pricing in this infrastructure expansion. Companies building today’s energy-intensive facilities, providing the cooling and power management systems they require, and facilitating the real estate transactions that make expansion possible have outperformed the broader technology sector. The stock performance pattern shows this clearly: specialized infrastructure companies have delivered superior returns compared to the AI companies that receive more attention.

This pattern suggests that the physical foundation of the AI revolution may prove as valuable as the software innovations driving headlines. The data center REITs, building systems providers, and specialized infrastructure companies represent positions in what appears to be the early stages of a multi-decade infrastructure transformation.

The Material Reality

The artificial intelligence revolution, like every industrial transformation before it, remains anchored to physical constraints. Current AI energy consumption represents not a problem to be solved through efficiency alone, but the first stage of a pattern that has driven technological progress for centuries. The resource hunger creates the economic pressure that forces the innovations that enable ubiquity.

The efficiency paradox, viewed through the lens of AI development, suggests that efficiency breakthroughs will not slow infrastructure expansion but accelerate it. Understanding this cycle—constraint, innovation, expansion—provides insight into how the next phase of development may unfold across an increasingly energy-intensive digital economy.

Bostrom’s paperclip maximizer scenario becomes less a cautionary tale about artificial intelligence and more a description of how economic systems respond to technological possibility. As efficiency makes AI applications cheaper and more viable, the total infrastructure footprint will continue expanding across an increasingly connected planet. The journey may prove more cyclical than linear, with boom and bust cycles reminiscent of the railway age, but the destination remains the same: a world where artificial intelligence, enabled by vast physical infrastructure, becomes as ubiquitous as electricity itself.

Sources:

Data Center Energy Consumption:

“2024 United States Data Center Energy Usage Report” – Lawrence Berkeley National Laboratory

Ireland Data Center Statistics:

“Data Centres Metered Electricity Consumption 2023” – Central Statistics Office Ireland

EirGrid Dublin Moratorium:

“Data centres get to grips with EirGrid’s Dublin pause” – RTÉ

https://www.rte.ie/news/business/2022/0116/1273819-data-centres-eirgrid/

AI Training Energy Requirements:

“The Energy Footprint of Humans and Large Language Models” – Communications of the ACM

https://cacm.acm.org/blogcacm/the-energy-footprint-of-humans-and-large-language-models/

Stargate Project:

“Announcing The Stargate Project” – OpenAI

Google AI Investment:

“Google expects 2025 capex to surge to $75bn on AI data center buildout” – Data Center Dynamics

Intel Hala Point Specifications:

“Intel Builds World’s Largest Neuromorphic System to Enable More Sustainable AI” – Intel Newsroom

Additional Data Sources:

OpenStreetMap – Global infrastructure location data

Yahoo Finance – Stock price data for infrastructure companies

ERC GEOCLOUD project (https://diesl.eu/geocloud/) – Data center geographic clustering research
