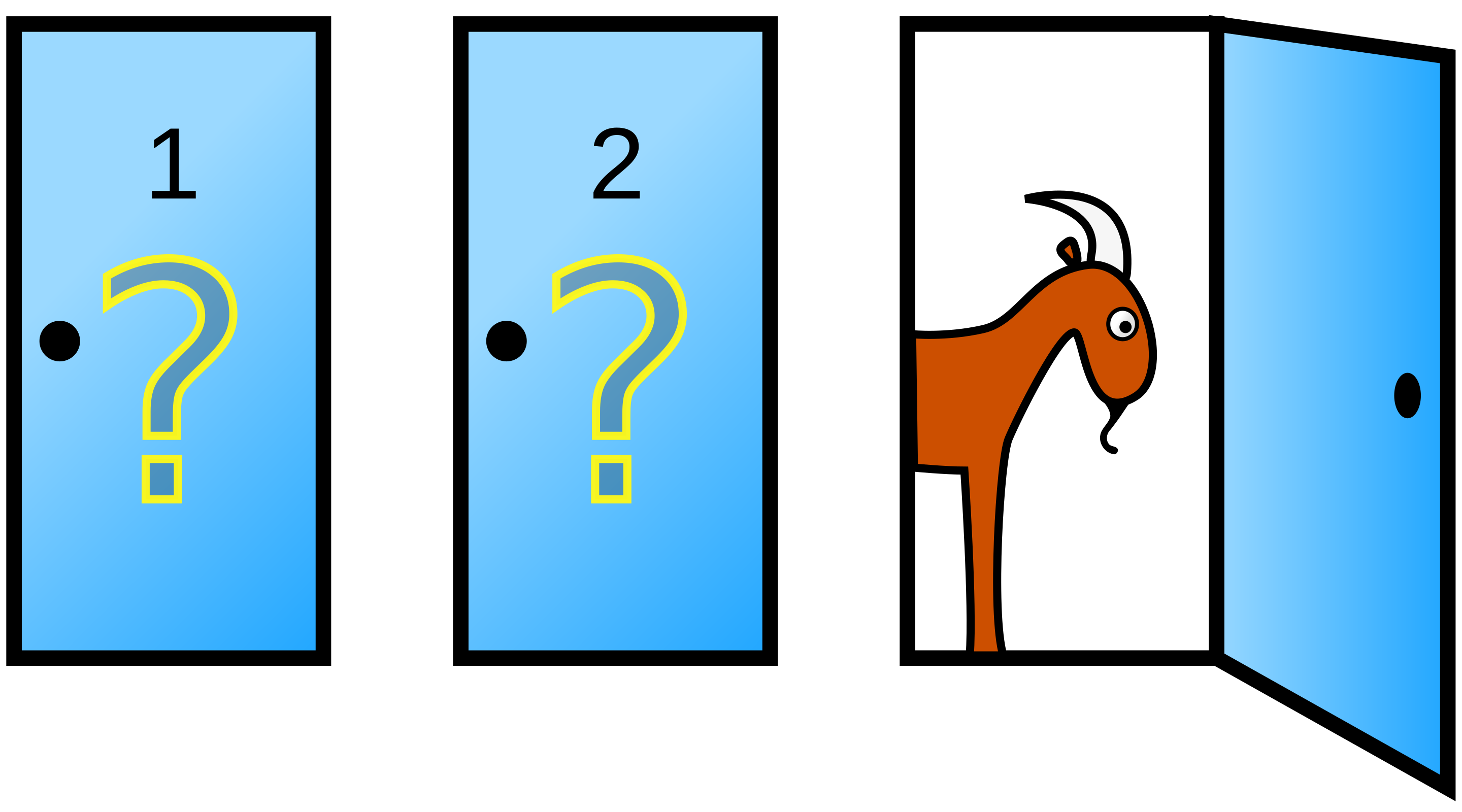

Even brilliant minds can be led astray by probability puzzles. When presented with the Monty Hall Problem, the renowned mathematician Paul Erdős initially rejected the correct solution – and he wasn’t alone. Thousands of readers, including PhDs in mathematics and statistics, wrote angry letters to Marilyn vos Savant after she published the correct solution, that switching doors wins two-thirds of the time, in her Parade magazine column in 1990. Their passionate resistance reveals something fascinating about how humans reason about uncertainty.

To explore these ideas hands-on, we’ve created a Jupyter notebook that implements both traditional and probabilistic programming approaches to the Monty Hall Problem. The notebook includes code for simulating the game, modeling player behavior, and analyzing how people learn from experience.
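For readers who want a feel for the simulation side before opening the notebook, here is a minimal sketch of a traditional Monte Carlo approach. It is not the notebook's code: the function names `play_round` and `win_rate`, the trial count, and the fixed seed are all assumptions made for illustration. It assumes the standard rules, where the host always opens a door that hides no car and is not the player's pick.

```python
import random


def play_round(switch: bool, rng: random.Random) -> bool:
    """Play one game under the standard rules; return True if the player wins the car."""
    doors = [0, 1, 2]
    car = rng.choice(doors)
    pick = rng.choice(doors)
    # The host opens a door that is neither the player's pick nor the car.
    opened = rng.choice([d for d in doors if d != pick and d != car])
    if switch:
        # Exactly one door is left that is neither picked nor opened.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == car


def win_rate(switch: bool, trials: int = 100_000, seed: int = 0) -> float:
    """Estimate the win probability for a fixed strategy by repeated play."""
    rng = random.Random(seed)  # hypothetical fixed seed, for repeatable runs
    wins = sum(play_round(switch, rng) for _ in range(trials))
    return wins / trials


if __name__ == "__main__":
    print(f"stay:   {win_rate(switch=False):.3f}")
    print(f"switch: {win_rate(switch=True):.3f}")
```

With enough trials, the estimate for staying should settle near one-third and the estimate for switching near two-thirds, which is the result so many letter writers refused to accept.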
